Shopware 5 - parallel thumbnail generation after moving a Shopware 5 system to another server

We had a customer with 400k images and 1,600k thumbnails who needed to move from an old HDD-based server to a new SSD-based one. The problem was that the old server was so slow that it needed two days just to count all the images, let alone copy them.

So we decided to copy only the original images and regenerate the thumbnails. To copy the originals, I created a small console command that exports the paths of all original images we need to copy: ExportImagesCommand.php

This file list can be passed to tar -T or rsync --files-from=, which tell these tools to process only the listed files. For the initial copy, tar is highly recommended, as it just picks up the listed files without doing any of the "calculation" that rsync does.
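As a rough sketch of how such a file list drives the copy, the snippet below builds a throwaway directory tree and copies only the listed original with tar -T. The directory layout, the file names, and the host in the rsync comment are made up for illustration. On the real systems the second tar would typically run on the new server behind ssh.

```shell
# Hypothetical layout: a temporary tree standing in for the shop's media folder.
work="$(mktemp -d)"
mkdir -p "$work/src/media/image/thumbnail" "$work/dst"
echo original > "$work/src/media/image/a.jpg"
echo thumb > "$work/src/media/image/thumbnail/a_200x200.jpg"

# The file list names originals only, one path per line, relative to the source root.
printf '%s\n' "media/image/a.jpg" > "$work/files.txt"

# tar -T reads the names of the files to archive from the list;
# -C changes into the given directory before reading or writing files.
tar -cf - -C "$work/src" -T "$work/files.txt" | tar -xf - -C "$work/dst"

# A later incremental sync could reuse the same list with rsync, for example:
#   rsync -a --files-from="$work/files.txt" "$work/src/" user@newhost:/path/to/shop/

# Only the listed original arrives at the destination, the thumbnail is skipped.
copied="$(cd "$work/dst" && find . -type f | sort)"
echo "$copied"
```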

SW5 default thumbnail generation would have taken 80 hours

... and would only use half of a core.

I was curious whether I could speed up the generation process. The server itself has 32 cores available, so I copied the thumbnail generation command from SW5 and modified it to work in batches with a --batch parameter:

ParallelThumbnailGenerateCommand.php

To make it work, I modified the Shopware core in engine/Shopware/Models/Media/Repository.php and changed the getAlbumMediaQuery function to:

    public function getAlbumMediaQuery($albumId, $filter = null, $orderBy = null, $offset = null, $limit = null, $validTypes = null, $batch = null)
    {
        $builder = $this->getAlbumMediaQueryBuilder($albumId, $filter, $orderBy, $validTypes);

        // Added: only select media whose id falls into the requested batch (0 to 999).
        if (is_numeric($batch)) {
            $builder->andWhere('MOD(media.id, 1000) = ?3');
            $builder->setParameter(3, $batch);
        }

        if ($limit !== null) {
            $builder->setFirstResult($offset)
                ->setMaxResults($limit);
        }

        return $builder->getQuery();
    }

The new $batch parameter is optional, so existing callers are not affected. A Shopware update would overwrite this change, but as I was on a quest to speed things up for a one-time task, I modified the core directly instead of building a long-term solution.

The added condition calculates the media id modulo 1000 and compares the result with the batch number. So we basically have 1000 batches (0 to 999) to process until all work is done.
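To make the modulo rule concrete, here is a small shell sketch that maps a few media ids to their batch number. The ids are invented for illustration, and batch_of is a helper name that exists only in this example. If the ids are spread roughly evenly, 400k images over 1000 batches comes to about 400 images per batch.

```shell
# Hypothetical helper: returns the batch number (0 to 999) for a media id.
batch_of() {
    echo $(( $1 % 1000 ))
}

# Made-up media ids; 17 and 1017 end up in the same batch.
for id in 17 1017 400123; do
    echo "media id $id -> batch $(batch_of "$id")"
done
```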

Now we only need to start all 1000 batches in a parallel manner. For doing so, I used the very helpful tool parallel - which is available in Linux:

It starts 64 batches in parallel and continues its work until all 1000 batches are finished.

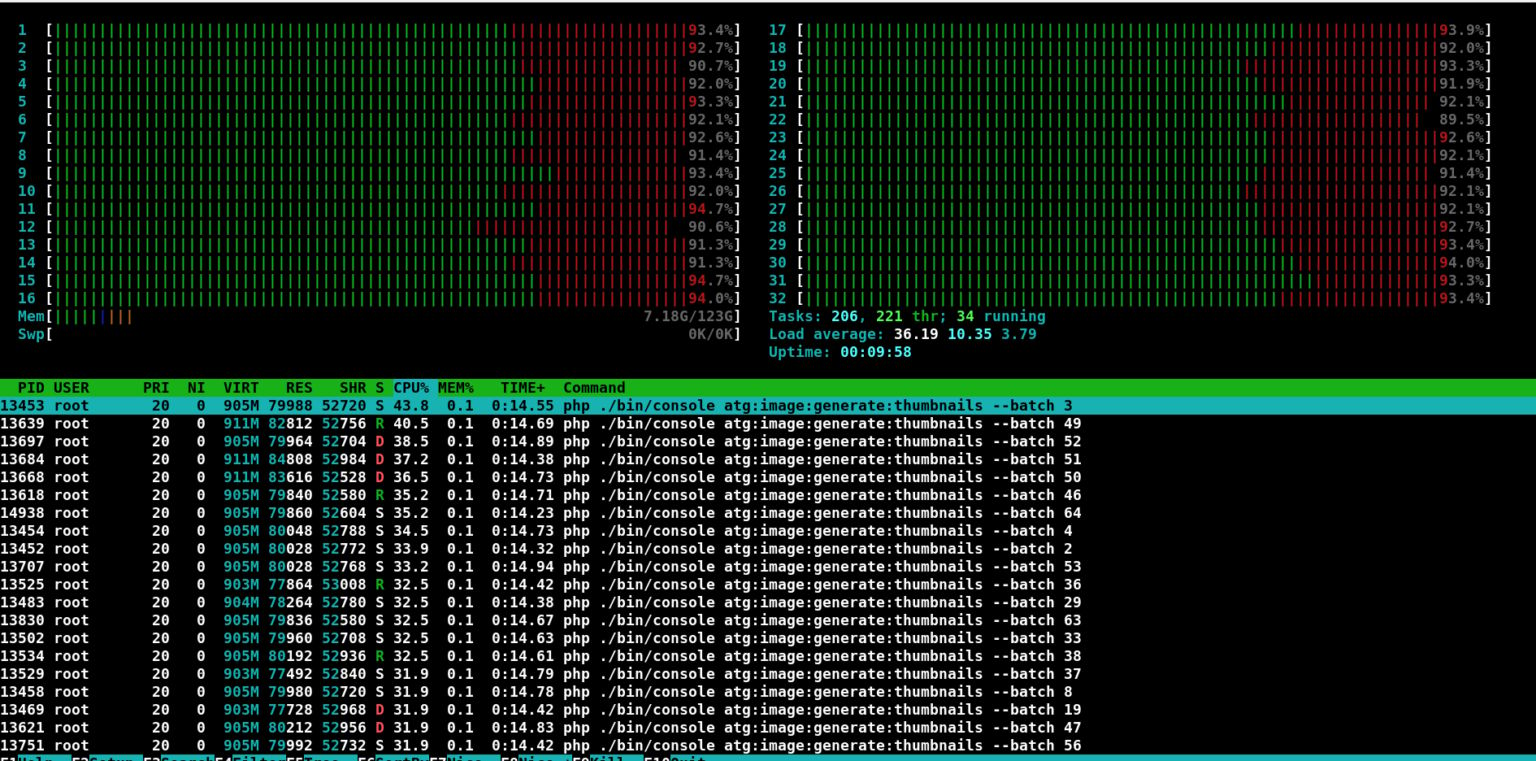

And this is how it looks at htop:

parallel -j 64 ./bin/console my:image:generate:thumbnails --batch ::: {0..999}
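If GNU parallel is not installed, the same fan-out over the batch numbers can be approximated with xargs -P. This is a swapped-in alternative, not what I ran for the migration. In the sketch below, echo stands in for the console command so that it is safe to run anywhere.

```shell
# -P 64: run up to 64 processes at once; -n 1: pass one batch number per call.
# "echo batch" is a stand-in for "./bin/console my:image:generate:thumbnails --batch".
finished="$(seq 0 999 | xargs -P 64 -n 1 echo batch | wc -l | tr -d ' ')"
echo "$finished batches finished"
```

For the real job, the stand-in would be replaced by the console command, so that xargs appends each batch number after --batch.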